Medical image annotation is the meticulous process of labeling medical scans such as X-rays, MRIs, and CT scans to teach artificial intelligence models to identify anatomical structures and diseases. It is similar to a seasoned radiologist pointing out subtle details in a scan to a medical student, except that the student is an AI model. This process transforms raw images into structured, labeled data that machine learning algorithms can learn from.

The Foundation of Trustworthy Medical AI

At its heart, medical image annotation is the bridge connecting human expertise and machine intelligence. It is the essential first step that enables AI to help clinicians diagnose diseases, plan treatments, and predict patient outcomes with greater accuracy and speed. Without precise, high-quality annotations, an AI model learns from a flawed textbook, which can lead to unreliable and even dangerous results.

This is far more than drawing boxes or lines on a screen. It demands deep medical knowledge to differentiate between healthy tissue and a subtle abnormality, such as a tiny lung nodule or the earliest signs of diabetic retinopathy. The quality of this foundational data directly shapes the AI's performance, making expert-led annotation a non-negotiable part of any serious medical AI project.

Why Quality Annotation Matters

The impact of high-quality annotation is not just theoretical; it is measurable and significant. By feeding AI clean, accurately labeled data, healthcare organizations can achieve concrete improvements:

- Improve Diagnostic Accuracy: Train models to detect anomalies that the human eye might miss, reducing false negatives and positives. For example, AI models trained on precisely annotated mammograms have shown the potential to identify early-stage breast cancer with higher sensitivity than human readers alone.

- Accelerate Clinical Workflows: Automate routine tasks like measuring organ volume or tracking tumor growth, freeing up specialists like radiologists and pathologists to focus on the most complex cases. This can reduce report turnaround times by up to 40% in some radiology departments.

- Enable Predictive Medicine: Build algorithms capable of forecasting disease progression or predicting a patient's response to treatment by recognizing subtle patterns in medical images invisible to the naked eye.

- Enhance Surgical Planning: Generate precise 3D models from annotated scans, providing surgeons with a detailed digital twin to plan complex procedures with greater confidence and accuracy.

The meticulous process of medical image annotation grounds AI in clinical reality. It ensures that algorithms are not just recognizing pixels, but are learning to see with the discerning eye of a medical expert, which is essential for building trust and ensuring patient safety.

A Rapidly Growing Field

The critical role of annotation is reflected in the market's explosive growth. The global medical image annotation market was valued at around $1.2 billion in 2023 and is on track to hit $4.5 billion by 2032. This surge is directly tied to the integration of AI into healthcare, where annotated images are the fuel for training models that can slash diagnostic errors by up to 30% in some applications. You can discover more insights about the medical image annotation market and its projected growth.

This expansion highlights a clear industry consensus: building reliable medical AI begins with an unwavering commitment to data quality. At Prudent Partners, our expertise in AI data annotation services delivers the clinical-grade accuracy needed to power the next wave of healthcare innovations.

A Closer Look at Core Annotation Techniques

Before an AI model can learn to interpret medical images, it needs a teacher. Medical image annotation provides that structured education for the algorithm. Different annotation techniques act as different teaching methods, each designed for a specific learning goal. Selecting the right one is essential for building an AI that can perform its diagnostic job with the accuracy patients and clinicians depend on.

These methods range from simply identifying a general area to meticulously labeling every single pixel. The choice always comes down to the clinical question the AI is being built to answer. Let's explore the most common techniques used to train medical AI.

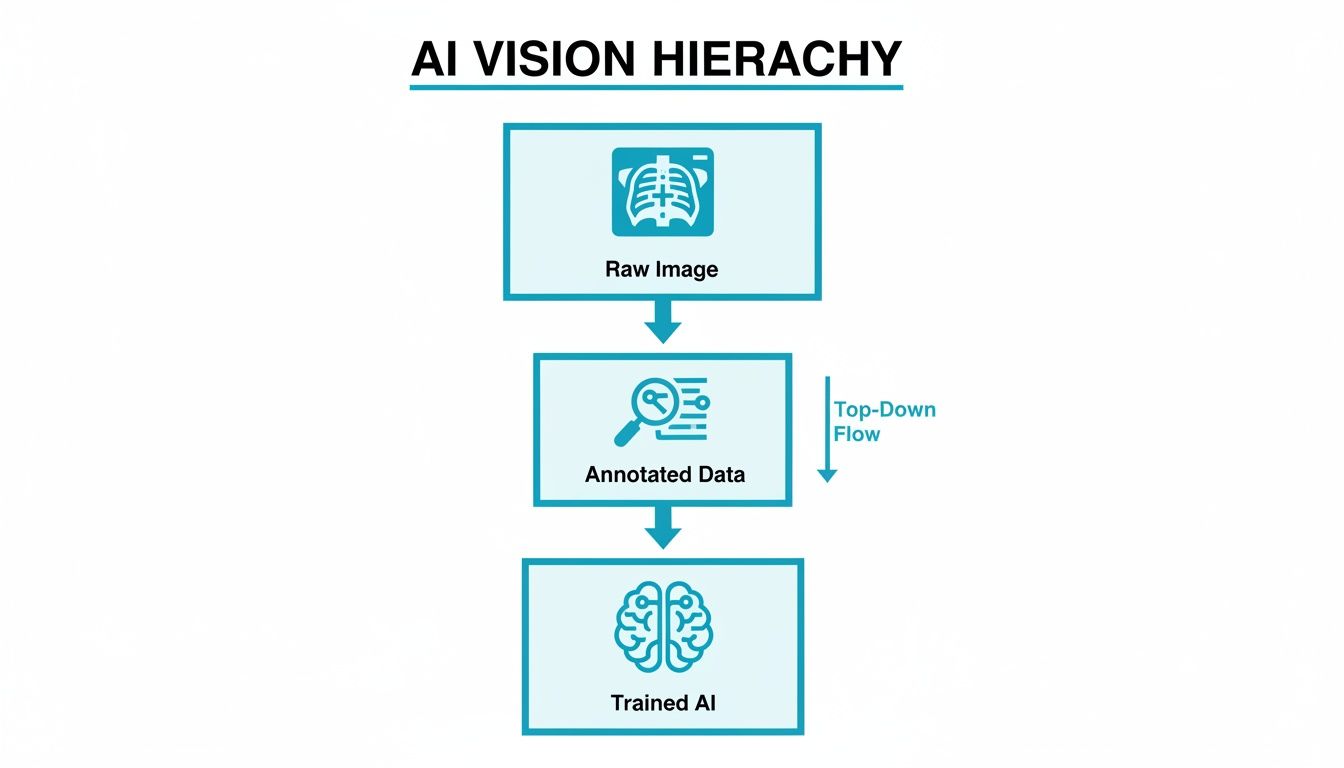

This simple workflow shows how we get from a raw, unlabeled scan to a powerful medical AI. It all starts with turning those images into structured, annotated data, the essential textbook the AI will learn from.

It is a straightforward but critical process. The quality of that "Annotated Data" directly dictates how smart and reliable the final "Trained AI" becomes.

Bounding Boxes For Rapid Localization

The fastest and most straightforward annotation method is the bounding box. It is exactly what it sounds like: drawing a simple rectangle around a region of interest (ROI), like an entire organ or a large, obvious anomaly.

Think of it as putting a frame around the important part of the picture. Bounding boxes are well suited to object detection tasks where the goal is only to confirm the presence and approximate location of something, such as training a model to quickly find the heart and lungs on a chest X-ray.

However, that simplicity is also a weakness. Since they are always rectangular, bounding boxes inevitably capture background pixels that are not part of the target. This makes them a poor choice for tasks that demand precision, like measuring the exact volume of a tumor.
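To make the trade-off concrete, here is a minimal pure-Python sketch that measures how much of a tight bounding box is background when the target is round. The circular "lesion" and the helper names are illustrative assumptions, not part of any particular annotation tool.

```python
def disk_pixels(cx, cy, r):
    """Pixel coordinates inside a circle: a toy stand-in for a round lesion."""
    return {
        (x, y)
        for x in range(cx - r, cx + r + 1)
        for y in range(cy - r, cy + r + 1)
        if (x - cx) ** 2 + (y - cy) ** 2 <= r ** 2
    }


def tight_bounding_box(pixels):
    """Smallest axis-aligned box (x_min, y_min, x_max, y_max) containing every pixel."""
    xs = [x for x, _ in pixels]
    ys = [y for _, y in pixels]
    return min(xs), min(ys), max(xs), max(ys)


def background_fraction(pixels):
    """Share of the box's pixels that do not belong to the target."""
    x0, y0, x1, y1 = tight_bounding_box(pixels)
    box_area = (x1 - x0 + 1) * (y1 - y0 + 1)
    return 1 - len(pixels) / box_area


lesion = disk_pixels(cx=50, cy=50, r=40)
print(f"{background_fraction(lesion):.1%} of the box is background")
```

In the continuous case a circle leaves 1 − π/4, about 21%, of its box as background; for thin, diagonal, or irregular structures the share is far larger, which is why boxes are a poor basis for volume measurements.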

Polygons For Precise Outlines

When the exact shape of an object is what matters, we turn to polygon annotation. This technique is far more meticulous. It involves placing a series of connected points around the perimeter of an object to create a custom, high-fidelity outline.

This method is the go-to when the boundary is everything. Common clinical uses include:

- Tumor Delineation: Carefully outlining an irregularly shaped tumor on a CT scan to plan radiation therapy.

- Organ Mapping: Precisely tracing the contours of the kidney or liver for volumetric analysis.

- Lesion Measurement: Accurately defining the borders of a skin lesion in a photo to track its growth.

It takes more time, but the detailed data from polygons teaches AI models about the specific shapes and features of anatomical structures and pathologies.
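Because a polygon is simply an ordered list of vertices, its enclosed area can be computed directly with the shoelace formula, and summing per-slice areas gives a rough volume for organ mapping. This sketch assumes in-plane pixel spacing and slice thickness in millimetres (values that would normally come from the scan's metadata); the function names are illustrative.

```python
def polygon_area(vertices):
    """Area enclosed by an ordered list of (x, y) vertices, via the shoelace formula."""
    n = len(vertices)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]  # wrap around to close the outline
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0  # abs() makes vertex order (CW/CCW) irrelevant


def volume_mm3(slice_outlines, pixel_spacing_mm, slice_thickness_mm):
    """Approximate volume by stacking per-slice polygon areas (simple slab model)."""
    pixel_area_mm2 = pixel_spacing_mm[0] * pixel_spacing_mm[1]
    total_pixels = sum(polygon_area(outline) for outline in slice_outlines)
    return total_pixels * pixel_area_mm2 * slice_thickness_mm


# Two slices with the same 40 x 30 pixel outline, 0.5 mm pixels, 2 mm slices.
outline = [(0, 0), (40, 0), (40, 30), (0, 30)]
print(volume_mm3([outline, outline], (0.5, 0.5), 2.0))  # 1200.0
```

The shoelace formula assumes a simple (non-self-intersecting) outline, and the slab model is only an approximation; clinical volumetry tools typically interpolate between slices.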

Semantic Segmentation For Pixel-Perfect Detail

For the absolute highest level of detail, semantic segmentation is the gold standard. This advanced technique does not just outline objects; it assigns a class label to every single pixel in an image. The result is a detailed, color-coded map of the entire scan.

Semantic segmentation is like giving an AI model a complete anatomical chart for an image. It does not just see that a tumor is present; it understands which pixels are the tumor, which are healthy brain tissue, and which are cerebrospinal fluid.

This incredible granularity is crucial for the most complex applications. In a brain MRI, for instance, semantic segmentation can differentiate between gray matter, white matter, and a tumor, providing the data needed for sophisticated surgical planning. To dive deeper into this powerful method, you can learn more about the role of segmentation in medical imaging in our detailed guide.
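In practice, a semantic segmentation is usually stored as a label map: an array the same size as the image in which every pixel holds exactly one class ID. The sketch below uses a hypothetical four-class scheme for a brain MRI slice and a plain nested list in place of a real image array, and shows how per-class areas fall straight out of the map.

```python
from collections import Counter

# Hypothetical class scheme; real projects define IDs in their guidelines.
CLASS_NAMES = {0: "background", 1: "gray matter", 2: "white matter", 3: "tumor"}


def class_areas(label_map, pixel_area_mm2=1.0):
    """Area covered by each class present in a 2D label map (one class ID per pixel)."""
    counts = Counter(class_id for row in label_map for class_id in row)
    return {CLASS_NAMES[cid]: n * pixel_area_mm2 for cid, n in sorted(counts.items())}


# A toy 4 x 5 slice: every pixel carries exactly one label.
slice_labels = [
    [0, 1, 1, 1, 0],
    [1, 2, 2, 3, 1],
    [1, 2, 3, 3, 1],
    [0, 1, 1, 1, 0],
]
print(class_areas(slice_labels, pixel_area_mm2=0.25))
```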

Landmark Annotation For Key Points

Finally, there is landmark annotation, also known as keypoint annotation. This technique is not about outlining areas; it is about marking specific points of interest. These points could be the tip of a vertebra on a spine X-ray or key locations on a joint for orthopedic analysis.

It is all about precise location. Landmark annotation is used heavily in surgical planning and cephalometric analysis in dentistry, where models need to measure exact distances and angles between critical anatomical features.
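Once landmarks are placed, distances and angles are simple geometry. The sketch below assumes 2D pixel coordinates and square pixels of known spacing; the angle between two landmark-defined lines is the same geometry used, for example, when measuring the tilt between two vertebral endplates. The function names and coordinates are hypothetical.

```python
import math


def distance_mm(p, q, pixel_spacing_mm=1.0):
    """Euclidean distance between two landmarks in millimetres (assumes square pixels)."""
    return math.dist(p, q) * pixel_spacing_mm


def angle_between_lines_deg(a1, a2, b1, b2):
    """Acute angle (0 to 90 degrees) between line a1-a2 and line b1-b2."""
    theta_a = math.atan2(a2[1] - a1[1], a2[0] - a1[0])
    theta_b = math.atan2(b2[1] - b1[1], b2[0] - b1[0])
    diff = abs(math.degrees(theta_a - theta_b)) % 180  # lines have no direction
    return min(diff, 180 - diff)


# Hypothetical endplate landmarks on two vertebrae (pixel coordinates).
upper = ((100, 200), (180, 180))
lower = ((110, 420), (190, 440))
print(round(angle_between_lines_deg(*upper, *lower), 1))
```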

Comparing Medical Image Annotation Techniques

To make it easier to choose the right tool for the job, here is a quick summary of the common annotation techniques, what they are best for, and the level of precision they offer.

| Annotation Technique | Description | Best Used For (Clinical Example) | Precision Level |
|---|---|---|---|
| Bounding Box | A simple rectangle drawn around a Region of Interest (ROI). | Quickly identifying the location of organs like the heart or lungs on a chest X-ray. | Low |
| Polygon | A series of connected points creating a precise outline of an irregular shape. | Delineating a tumor's exact border for radiation planning or measuring an organ's volume. | High |
| Semantic Segmentation | Assigning a class label (e.g., "tumor," "healthy tissue") to every pixel in an image. | Differentiating tissue types in a brain MRI for surgical planning or volumetric analysis. | Very High |
| Landmark (Keypoint) | Marking specific, individual points of interest on an image. | Measuring angles between vertebrae for scoliosis assessment or planning orthopedic surgery. | High (Positional) |

Each of these techniques provides a different kind of "education" for an AI model. Selecting the right one depends entirely on balancing the need for speed, cost, and the level of detail required to solve a specific clinical problem.

How to Build a Scalable Annotation Workflow

Knowing the techniques is one thing, but applying them consistently across thousands of images is a different challenge. Building a workflow for medical image annotation is not just about picking the right tool; it is about creating a secure, repeatable process that guarantees accuracy and scalability from the first image to the millionth.

This process starts long before a single pixel gets labeled. It begins with a secure foundation for handling sensitive data and crystal-clear rules for your annotation team. A solid workflow is the operational backbone of your entire AI project, preventing costly errors and ensuring your final dataset is clinic-ready.

Stage 1: Secure Data Ingestion and Preparation

Every workflow must start with an uncompromising focus on security. Medical data is incredibly sensitive and protected by strict regulations like HIPAA in the U.S. and GDPR in Europe. The first step is always secure data ingestion, followed immediately by de-identification to strip all personally identifiable information (PII) from the images and metadata.

Once the data is secure, it needs to be organized for the annotators. This means sorting images by modality (like MRI, CT, or X-ray), creating manageable batches for specific tasks, and ensuring all files are in a format compatible with your annotation platform. Good preparation here prevents confusion later and keeps the project moving efficiently.
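These two preparation steps can be sketched in plain Python over image headers represented as dictionaries keyed by DICOM attribute keywords. This is an illustration only: the set of identifying attributes below is a small, assumed subset, and a production pipeline should follow a complete de-identification profile (such as those defined in DICOM PS3.15) and also check for identifiers burned into the pixel data. The function names and pseudonym format are hypothetical.

```python
from collections import defaultdict

# Assumed, deliberately incomplete subset of identifying DICOM attributes.
IDENTIFYING_KEYWORDS = {
    "PatientName", "PatientID", "PatientBirthDate", "PatientAddress",
    "ReferringPhysicianName", "InstitutionName", "AccessionNumber",
}


def deidentify(header, pseudonym):
    """Drop identifying attributes and substitute a project-specific pseudonym."""
    clean = {k: v for k, v in header.items() if k not in IDENTIFYING_KEYWORDS}
    clean["PatientID"] = pseudonym  # keeps one patient's studies grouped together
    return clean


def batch_by_modality(headers, batch_size):
    """Group headers by Modality (e.g. 'CT', 'MR', 'CR') and split each group into batches."""
    groups = defaultdict(list)
    for h in headers:
        groups[h.get("Modality", "UNKNOWN")].append(h)
    return {
        modality: [items[i:i + batch_size] for i in range(0, len(items), batch_size)]
        for modality, items in groups.items()
    }


raw = [
    {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "CT", "SliceThickness": 1.25},
    {"PatientName": "ROE^RICHARD", "PatientID": "67890", "Modality": "MR"},
    {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "CT"},
]
pseudonyms = {}  # real ID -> pseudonym; store securely, separate from the images
clean = []
for h in raw:
    alias = pseudonyms.setdefault(h["PatientID"], f"ANON-{len(pseudonyms) + 1:04d}")
    clean.append(deidentify(h, alias))
batches = batch_by_modality(clean, batch_size=2)
```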

Stage 2: Creating Unambiguous Annotation Guidelines

Clear instructions are the single most important ingredient for consistent, high quality annotations. Before anyone starts labeling, you must develop a comprehensive set of guidelines. This document is the "source of truth" for the whole team, leaving absolutely no room for guesswork.

Your guidelines should nail down the specifics:

- Detailed Definitions: Clearly define every single label and class. For example, do not just say "lesion"; specify the exact visual criteria for a "benign nodule" versus a "malignant lesion."

- Visual Examples: Show, do not just tell. Provide multiple annotated images for each class, including both textbook examples and tricky edge cases.

- Step-by-Step Instructions: Outline the exact process for using the annotation tools. Should they use a certain number of polygon points? How should they handle overlapping structures? Spell it out.

Think of your annotation guidelines as the detailed lesson plan for your AI's education. A vague plan leads to inconsistent learning and an unreliable model. A precise, example-rich plan ensures every annotator teaches the AI the exact same lessons, resulting in a predictable and accurate system.
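Some teams also keep a machine-readable companion to the written guidelines so that basic rule violations are caught automatically before human review. The schema, label names, and `min_points` rule below are hypothetical examples of what such a companion might look like, not a standard format.

```python
# Hypothetical label schema mirroring the written guidelines.
GUIDELINE_SCHEMA = {
    "benign_nodule": {"tool": "polygon", "min_points": 8},
    "malignant_lesion": {"tool": "polygon", "min_points": 8},
    "vertebral_landmark": {"tool": "keypoint"},
}


def guideline_violations(annotation):
    """Return human-readable problems with one annotation (an empty list means it passes)."""
    rule = GUIDELINE_SCHEMA.get(annotation["label"])
    if rule is None:
        return [f"unknown label {annotation['label']!r}"]
    problems = []
    if annotation["tool"] != rule["tool"]:
        problems.append(
            f"{annotation['label']} must be drawn with {rule['tool']}, not {annotation['tool']}"
        )
    min_points = rule.get("min_points", 0)
    if len(annotation.get("points", [])) < min_points:
        problems.append(f"{annotation['label']} needs at least {min_points} outline points")
    return problems


# A vague "lesion" label is rejected outright, as the guidelines intend.
print(guideline_violations({"label": "lesion", "tool": "polygon", "points": []}))
```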

Stage 3: Task Distribution and Pilot Projects

With solid guidelines in hand, it is time to get your team calibrated. But do not just throw the entire dataset at them. Always start with a pilot project. This small-scale trial run is incredibly valuable.

A pilot project helps you spot ambiguities in your guidelines, get a baseline for how each annotator performs, and realistically estimate the time and cost for the full-scale project. You will quickly see who is fast, who is accurate, and where you need to refine your quality checks before committing serious resources. At Prudent Partners, we insist on this step in our AI data annotation assessment because it sets projects up for success from day one.
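The time-and-cost extrapolation from a pilot is simple arithmetic. This sketch assumes the pilot sample is representative of the full dataset and that QA review adds a fixed fractional overhead; the parameter names and example figures are hypothetical placeholders, not benchmarks.

```python
def extrapolate_from_pilot(pilot_images, pilot_hours, total_images,
                           qa_overhead=0.0, hourly_rate=None):
    """Scale pilot throughput to the full dataset.

    qa_overhead: extra review effort as a fraction of annotation time (0.25 = +25%).
    hourly_rate: optional cost per hour; if given, a cost estimate is returned too.
    """
    hours_per_image = pilot_hours / pilot_images
    total_hours = hours_per_image * total_images * (1 + qa_overhead)
    cost = total_hours * hourly_rate if hourly_rate is not None else None
    return total_hours, cost


# Placeholder figures: 200 pilot images took 50 hours; 10,000 images in scope.
hours, cost = extrapolate_from_pilot(200, 50, 10_000, qa_overhead=0.25, hourly_rate=40)
print(f"~{hours:,.0f} hours, ~${cost:,.0f}")  # ~3,125 hours, ~$125,000
```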

Stage 4: Multi-Layered Quality Assurance

For clinical-grade data, a single pass of annotation is never enough. A scalable workflow must have a multi-layered quality assurance (QA) process baked right in. This creates a system of checks and balances designed to catch errors and enforce consistency.

A battle-tested QA workflow usually looks like this:

- Initial Annotation: The first annotator labels the image based on the guidelines.

- Peer Review: A second, equally qualified annotator reviews the initial work, correcting any mistakes or inconsistencies they find.

- Expert Validation: For the most critical tasks, a subject matter expert, like a board-certified radiologist, performs a final review and gives the ultimate sign-off.

This layered approach systematically drives up data quality at each stage. It also creates a powerful feedback loop, continuously training your annotation team and improving the accuracy of the entire project.
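The layered review can be modelled as a small pipeline in which each stage may revise the label and every step is recorded for auditability. In this sketch the three stage functions are hypothetical stand-ins for human work done in an annotation platform, and escalating only `critical` tasks to expert validation mirrors the "most critical tasks" rule in the workflow above.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Stage = Callable[[str], str]  # takes the current label, returns a (possibly revised) label


@dataclass
class Task:
    image_id: str
    critical: bool
    label: str = ""
    audit_log: List[str] = field(default_factory=list)


def run_layered_qa(task: Task, annotate: Stage, peer_review: Stage,
                   expert_validate: Stage) -> Task:
    """Initial annotation -> peer review -> (critical tasks only) expert sign-off."""
    stages = [("annotation", annotate), ("peer review", peer_review)]
    if task.critical:
        stages.append(("expert validation", expert_validate))
    for name, stage in stages:
        revised = stage(task.label)
        outcome = "changed" if revised != task.label else "unchanged"
        task.audit_log.append(f"{name}: {outcome}")
        task.label = revised
    return task


task = run_layered_qa(
    Task("ct_0001", critical=True),
    annotate=lambda _: "benign_nodule",
    peer_review=lambda label: label,                   # reviewer agrees
    expert_validate=lambda label: "malignant_lesion",  # expert overrides
)
print(task.label, task.audit_log)
```

Counting how often each stage changes a label is one simple, measurable input to the feedback loop described above.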

Achieving Clinical-Grade Annotation Quality

In the world of medical AI, "good enough" is never acceptable. The stakes are too high. When an algorithm is designed to help diagnose disease, achieving clinical-grade quality in medical image annotation is not just a goal; it is the absolute, unshakeable standard.

This goes well beyond simple accuracy checks. It demands a multi-layered strategy to ensure every single label is reliable, consistent, and clinically sound. This commitment is what transforms a promising AI model into a trusted diagnostic tool. Without this rigorous oversight, even the most advanced algorithm can fail, learning from subtle errors that compromise its performance and, ultimately, patient safety.

A Framework for Uncompromising Quality

A truly robust quality assurance (QA) framework is built on three pillars: expert review, consensus-based labeling, and hard data from quantitative metrics. Each piece of this puzzle addresses a different aspect of quality, and together, they create datasets that are truly ready for the real world.

The process often starts with a peer review, where one annotator’s work is double-checked by another. But for complex medical tasks, the final sign-off absolutely must come from a subject matter expert (SME), like a board-certified radiologist or pathologist. Their deep clinical knowledge is irreplaceable for validating nuanced annotations that could mean the difference between an accurate diagnosis and a missed one.

The Power of Consensus

Even experts can disagree. Individual opinions can vary, which introduces potential bias into the training data. To solve this, leading annotation providers use a consensus-based labeling approach. In this model, multiple qualified annotators label the same image independently.

Their annotations are then compared, and a final "gold standard" label is built from the areas where they agree, for example by majority vote. This process is powerful in several ways:

- It minimizes individual bias by averaging out subjective interpretations, leading to a more objective ground truth.

- It flags ambiguous cases for review. When annotators consistently disagree on a specific image, you know it needs a second look from a senior expert.

- It improves the entire team's skills over time through a constant feedback loop.
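A common way to implement consensus on segmentation masks is a pixel-wise majority vote, with the share of disputed pixels used to flag images for senior review. This is a minimal sketch assuming binary masks of equal size from an odd number of annotators; the 5% escalation threshold is a hypothetical choice, and more sophisticated fusion methods exist (for example STAPLE, which weights annotators by their estimated reliability).

```python
def consensus_mask(masks, escalation_threshold=0.05):
    """Pixel-wise majority vote over binary masks (lists of 0/1 rows).

    Returns (consensus, disputed_fraction, needs_expert_review).
    A pixel is 'disputed' when the annotators did not all agree on it.
    """
    n = len(masks)
    rows, cols = len(masks[0]), len(masks[0][0])
    consensus = [[0] * cols for _ in range(rows)]
    disputed = 0
    for r in range(rows):
        for c in range(cols):
            votes = sum(mask[r][c] for mask in masks)
            consensus[r][c] = 1 if 2 * votes > n else 0  # strict majority
            if 0 < votes < n:
                disputed += 1
    disputed_fraction = disputed / (rows * cols)
    return consensus, disputed_fraction, disputed_fraction > escalation_threshold


a = [[1, 1], [0, 0]]
b = [[1, 0], [0, 0]]
c = [[1, 1], [0, 1]]
print(consensus_mask([a, b, c]))
```

Measuring disagreement over the whole image dilutes it with easy background pixels; counting only pixels that at least one annotator marked is a stricter alternative.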

Measuring What Matters: Key Performance Metrics

While expert eyes provide essential qualitative validation, we also need objective, mathematical ways to measure annotation accuracy. This is where performance metrics come in. Two of the most critical metrics in medical image segmentation are Intersection over Union (IoU) and the Dice Coefficient.

Intersection over Union (IoU): Think of two shapes, one drawn by the annotator and one representing the perfect "ground truth." IoU takes the area where these two shapes overlap (their intersection) and divides it by the total area they cover together (their union). A score of 1.0 means a perfect match, and 0 means no overlap at all.

Dice Coefficient: This metric is closely related to IoU and also gauges how much two shapes overlap. It is calculated as twice the area of the overlap divided by the sum of the two shapes' areas. Like IoU, it ranges from 0 to 1 and is a core indicator of segmentation accuracy.
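In symbols, for an annotation A and a reference B, IoU = |A ∩ B| / |A ∪ B| and Dice = 2|A ∩ B| / (|A| + |B|). The sketch below computes both on sets of pixel coordinates; treating two empty masks as a perfect match is a convention assumed here, and tools differ on that edge case.

```python
def iou(a, b):
    """Intersection over Union of two pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0  # both empty: assumed perfect match


def dice(a, b):
    """Dice coefficient: twice the overlap divided by the sum of both areas."""
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 1.0


annotator = {(0, c) for c in range(0, 4)}  # pixels (0,0)..(0,3)
reference = {(0, c) for c in range(2, 6)}  # pixels (0,2)..(0,5)
print(iou(annotator, reference), dice(annotator, reference))  # 0.3333333333333333 0.5
```

The two scores always rank annotations the same way, because Dice = 2·IoU / (1 + IoU). Dice is simply the larger number for any partial overlap, so a Dice target of 0.90 corresponds to an IoU of roughly 0.82.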

These metrics give you a clear, numerical score for quality. They allow teams to set precise accuracy targets, like an average IoU of 0.95 or higher, and track performance with total clarity. For a deeper dive into establishing these benchmarks, Prudent Partners offers a comprehensive data annotation assessment that helps organizations define their quality standards right from the start.

To really nail this down, it helps to see these metrics side by side.

Key Quality Metrics in Medical Image Annotation

Here is a breakdown of the essential metrics used to measure the accuracy and reliability of annotated medical data. Getting these right is fundamental to training AI models you can trust.

| Metric | What It Measures | Why It's Important for Medical AI | Ideal Score Range |
|---|---|---|---|
| Intersection over Union (IoU) | The overlap between an annotation (or prediction) and the ground truth, divided by the total area they cover together. | Crucial for segmentation tasks (e.g., outlining tumors). A high IoU indicates the region of interest is captured precisely. | 0.90 – 1.0 |
| Dice Coefficient | Twice the overlap divided by the sum of both areas; a direct function of IoU and never lower than IoU for the same pair. | Heavily used in medical segmentation to evaluate how accurately the boundaries of organs or lesions are captured. | 0.90 – 1.0 |
| Precision | The fraction of positive predictions that were actually correct (True Positives / (True Positives + False Positives)). | Minimizes false alarms. In diagnostics, high precision means that when the AI flags something, it is very likely to be correct. | > 0.95 |
| Recall (Sensitivity) | The fraction of actual positives that were correctly identified (True Positives / (True Positives + False Negatives)). | Critical for not missing anything important. In cancer screening, high recall ensures the AI does not overlook potential tumors. | > 0.95 |
| Inter-Annotator Agreement (IAA) | The level of agreement between multiple human annotators labeling the same data. | Measures the consistency and objectivity of the ground truth itself. High IAA builds confidence in the dataset's reliability. | Cohen's Kappa > 0.80 |

Ultimately, a combination of these metrics provides a holistic view of your data's quality, ensuring it is robust enough for clinical applications.
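Precision, recall, and Cohen's kappa are equally short to compute. The sketch below works on raw counts and on two annotators' per-image labels; the example labels are made up for illustration. Kappa corrects raw agreement for the agreement expected by chance, given how often each annotator uses each label.

```python
from collections import Counter


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall (sensitivity) = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators labeling the same items."""
    n = len(labels_a)
    observed = sum(x == y for x, y in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:  # both annotators used one identical class throughout
        return 1.0
    return (observed - expected) / (1 - expected)


reader_1 = ["nodule"] * 4 + ["normal"] * 6
reader_2 = ["nodule"] * 3 + ["normal"] * 6 + ["nodule"]
print(precision_recall(tp=90, fp=5, fn=10))
print(round(cohens_kappa(reader_1, reader_2), 3))
```

Here the two readers agree on 8 of 10 images (80%), but chance alone would produce 52% agreement given their label frequencies, so kappa is only about 0.58, well short of the 0.80 target in the table.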

An Actionable Quality Checklist

Whether you are working with an internal team or an external partner, you need a simple, actionable checklist to hold every annotation accountable.

- Guideline Adherence: Does every single annotation perfectly align with the project guidelines, including all those tricky edge cases?

- Positional Accuracy: Are polygons, bounding boxes, and keypoints placed with sub-pixel precision? There is no room for “close enough.”

- Label Correctness: Is every label assigned to the correct anatomical structure or pathology? No exceptions.

- Completeness: Have all required objects of interest in an image been annotated? A missed lesion is a failed annotation.

- Consistency: Do annotations maintain a uniform style and quality standard across the entire dataset and among all annotators?

By systematically applying this checklist and combining expert oversight with robust metrics, you can build the clinical-grade datasets needed to develop safe, effective, and trustworthy medical AI.

How to Choose the Right Annotation Partner

Picking the right partner is one of the most critical decisions you will make on your medical AI journey. The success of your model is completely dependent on the quality of its training data, and the right partner acts as a guardian of that quality. This decision goes far beyond comparing price sheets; it requires a serious look at a vendor’s expertise, security, and ability to grow with you without ever compromising on accuracy.

Choosing a partner is like selecting a co-pilot for a critical mission. You need someone who has navigated this exact terrain before, whether that is interpreting complex MRI scans or spotting subtle anomalies on pathology slides. A generic data vendor will not suffice.

Evaluating Domain Expertise and Certifications

The first and most important thing to look for is deep domain expertise. Your potential partner needs to prove they understand the specific medical modalities you work with. Their team should include, or at least be supervised by, professionals with clinical backgrounds like radiologists or pathologists who understand the nuances of the images being labeled.

Beyond their people, look for industry standard certifications that prove their commitment to process and security.

- ISO/IEC 27001: This is the gold standard for information security. It confirms the partner has a formal system for managing sensitive data, which is non-negotiable when you are handling patient information.

- ISO 9001: This certification shows a commitment to quality management. It means the partner has established, and maintains, a process for delivering consistent, high-quality work.

- HIPAA and GDPR Compliance: The partner absolutely must have robust, auditable processes in place to guarantee full compliance with healthcare data privacy laws like HIPAA and GDPR.

These are not just logos for a website; they are proof that a vendor takes quality and security seriously. They provide a fundamental layer of trust.

Assessing Quality Assurance and Technology

A partner’s quality assurance (QA) framework is what drives accuracy. A quick, single-pass review is nowhere near enough for medical applications. You need a partner with a multi-layered QA process that includes peer reviews and, crucially, final validation by subject matter experts. Ask them to walk you through their exact QA workflow.

A partner’s commitment to quality should be transparent and measurable. They should be able to articulate their process for handling disagreements between annotators and how they establish a “gold standard” for your project, ensuring consistency across the entire dataset.

Their technology is just as important. A good annotation platform should be secure, efficient, and able to handle complex medical imaging formats like DICOM. It should also offer clear analytics and reporting, giving you total visibility into project progress, annotator performance, and quality metrics. At Prudent Partners, our expertise in AI data annotation services is built on a foundation of both expert led QA and a secure, transparent technology platform.

The Invaluable Role of a Paid Pilot Project

Finally, never commit to a long-term partnership without first running a paid pilot project. A pilot is the ultimate test drive. It is the single best way to validate a vendor's claims before you scale, allowing you to assess their performance on a small but representative sample of your own data.

During a pilot, you can evaluate:

- Communication and Collaboration: How responsive are they? Do they ask the right questions?

- Guideline Adherence: Can they actually follow your specific annotation rules?

- Quality and Consistency: Does the work they deliver meet your accuracy benchmarks?

- Turnaround Time: Can they deliver high quality annotations within the timelines they promised?

This step removes the guesswork and replaces promises with tangible proof. By investing in a pilot, you ensure the partner you choose is not just another vendor; they are a true extension of your team, ready to deliver the clinical-grade data your mission demands.

Common Questions About Medical Image Annotation

When you are diving into medical AI, a lot of questions come up. AI teams, data scientists, and healthcare innovators often ask us about compliance, cost, timelines, and tools. Getting straight answers is key to building a project that actually works in the real world.

Here are some of the most common questions we get, with direct answers to help you finalize your strategy.

How Long Does a Medical Image Annotation Project Take?

This is the classic "it depends" answer, but for good reason. There is no one-size-fits-all timeline because every project is unique. A small pilot with a few hundred images needing simple bounding boxes could be completed in a few weeks.

However, a large-scale project, such as semantic segmentation of thousands of multi-slice DICOM images, is a different story. That could easily take several months, or even longer.

The timeline really boils down to four factors:

- Project Scope: The sheer number of images is the biggest driver. More images mean more time.

- Annotation Complexity: Drawing simple boxes is fast. Pixel-perfect semantic segmentation is not.

- Quality Assurance Protocol: A multi-layered review process with peer checks and expert validation adds time, but it is absolutely essential for clinical-grade accuracy.

- Team Expertise: An experienced team that already knows the tools and the specific medical modality will always be more efficient.

What Is the Cost of Medical Image Annotation?

Just like the timeline, cost is variable. The price tag depends on the same core factors: scale, complexity, and the level of expertise required. Labeling a standard chest X-ray is a world away from segmenting a complex 3D brain MRI, and the cost reflects that difference.

A key cost driver is the level of human expertise involved. Projects requiring board-certified radiologists for final validation will naturally cost more than those that can be handled by trained, non-clinical annotators. However, this expert oversight is an investment in data quality that pays dividends in model performance and reliability.

For instance, a high-volume project using simple bounding boxes might be priced per image or even per annotation. A more intricate task like polygon annotation or semantic segmentation is usually priced per hour because of its detailed, time-consuming nature.

Our advice? Always start with a pilot project. It is the best way to get a precise, data-driven cost estimate for your specific needs.

How Do You Ensure Patient Data Privacy?

This is not just a best practice; it is a strict legal and ethical mandate. The entire medical image annotation workflow must be built on a foundation of rock-solid security and compliance with regulations like HIPAA and GDPR.

The process starts with thorough data de-identification. All personally identifiable information (PII) has to be scrubbed from the images and metadata before a single annotation is made. After that, a secure annotation platform is non-negotiable. It needs features like access controls, encryption, and audit trails to track every action.

If you are working with an external partner, always verify their security certifications, like ISO/IEC 27001. Make sure they sign a Business Associate Agreement (BAA) to legally guarantee their commitment to protecting patient data.

How Is the Market for These Tools Evolving?

The market for medical image annotation tools is growing incredibly fast. This is no surprise, given the explosion in medical imaging procedures and the wider adoption of AI in healthcare. The industry is clearly moving toward more sophisticated and efficient annotation solutions.

In the United States alone, the healthcare data annotation tools market hit $65.3 million in revenue in 2023. It is projected to reach $330.4 million by 2030, growing at an impressive CAGR of 26.1%. This boom is directly tied to the surge in imaging procedures like X-rays, MRIs, and CT scans, which have increased by over 20% annually.

Hospitals and diagnostic centers are the main drivers here. They rely on these tools to manage workflows and boost efficiency, with some platforms automating up to 70% of routine tasks. You can read the full research about healthcare data annotation tools to get a deeper look at the market dynamics. This trend underscores the critical need for advanced tools that can handle both scale and complexity while maintaining clinical level precision.
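The 26.1% CAGR quoted above is consistent with the two U.S. revenue figures. Over the seven years from 2023 to 2030, the compound annual growth rate works out as:

$$
\mathrm{CAGR} = \left(\frac{330.4}{65.3}\right)^{1/7} - 1 \approx 5.06^{1/7} - 1 \approx 0.261 = 26.1\%
$$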

Your AI initiative deserves a data partner that understands the high stakes of healthcare. At Prudent Partners, we deliver clinical-grade medical image annotation with an unwavering commitment to quality, security, and scalability. Connect with us to discuss your project and discover how our expert-led services can accelerate your path to building safe and effective medical AI. Learn more at https://prudentpartners.in.